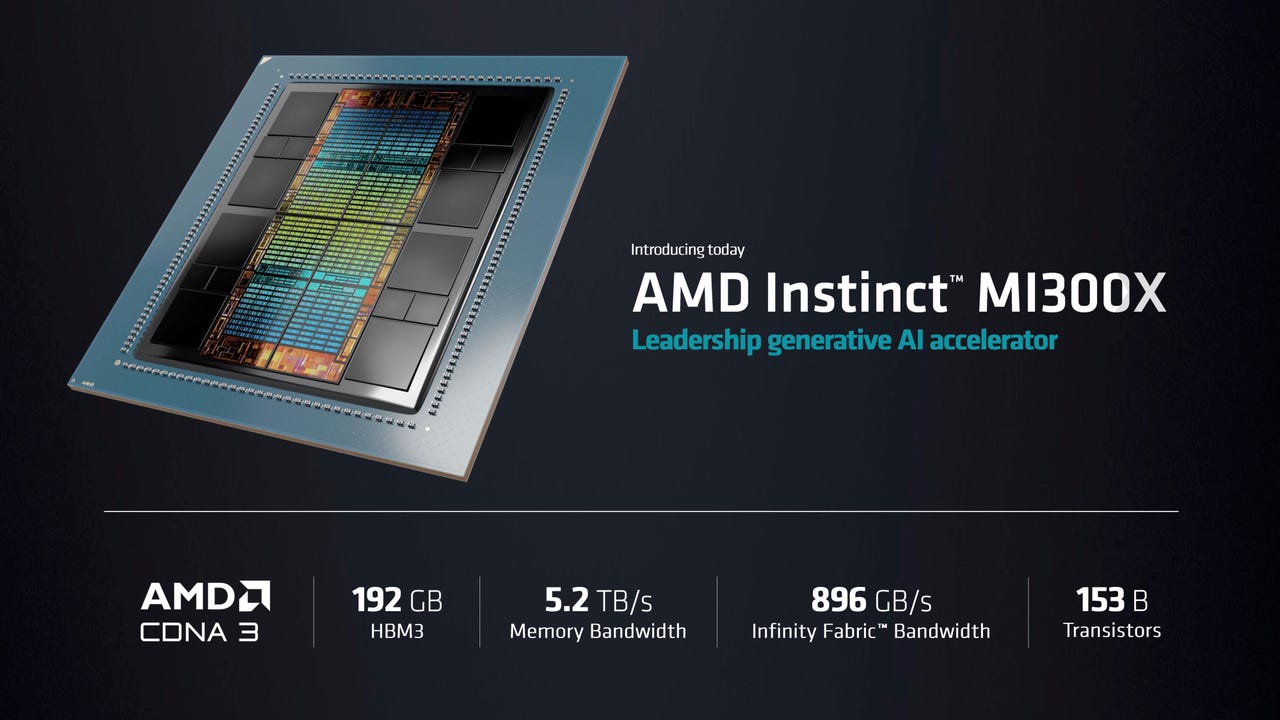

AMD unveils MI300X AI chip as ‘generative AI accelerator’

AMD’s Instinct MI300X GPU features multiple GPU “chiplets” plus 192 gigabytes of HBM3 DRAM and 5.2 terabytes per second of memory bandwidth. The company said it is the only chip that can hold large language models of up to 80 billion parameters in memory. Image: AMD

Advanced Micro Devices CEO Lisa Su on Tuesday in San Francisco unveiled a chip that is a centerpiece of the company’s strategy for artificial intelligence computing, touting its large memory capacity and data throughput for so-called generative AI tasks such as large language models.

The Instinct MI300X, as the part is known, is a follow-on to the previously announced MI300A. The chip is really a combination of multiple “chiplets,” individual chips that are joined together in a single package by shared memory and networking links.

Su, onstage for an invite-only audience at the Fairmont Hotel in downtown San Francisco, referred to the part as a “generative AI accelerator,” and said the GPU chiplets contained in it, a family known as CDNA 3, are “designed specifically for AI and HPC [high-performance computing] workloads.”

The MI300X is a “GPU-only” version of the part. The MI300A is a combination of three Zen4 CPU chiplets with multiple GPU chiplets. But in the MI300X, the CPUs are swapped out for two additional CDNA 3 chiplets.

The MI300X increases the transistor count from 146 billion transistors to 153 billion, and the shared DRAM memory is boosted from 128 gigabytes in the MI300A to 192 gigabytes.

The memory bandwidth is boosted from 800 gigabytes per second to 5.2 terabytes per second.

“Our use of chiplets in this product is very, very strategic,” said Su, because of the ability to mix and match different kinds of compute, swapping out CPU or GPU.

Su said the MI300X will offer 2.4 times the memory density of Nvidia’s H100 “Hopper” GPU, and 1.6 times the memory bandwidth.

“The generative AI, large language models have changed the landscape,” said Su. “The need for more compute is growing exponentially, whether you’re talking about training or about inference.”

To demonstrate the need for powerful computing, Su showed the part working on what she said is the most popular large language model at the moment, the open source Falcon-40B. Language models require more compute as they are built with greater and greater numbers of what are called neural network “parameters.” Falcon-40B consists of 40 billion parameters.

The MI300X, she said, is the first chip that is powerful enough to run a neural network of that size entirely in memory, rather than having to move data back and forth to and from external memory.

Su demonstrated the MI300X creating a poem about San Francisco using Falcon-40B.

“A single MI300X can run models up to approximately 80 billion parameters” in memory, she said.
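A rough back-of-the-envelope check shows why a figure of about 80 billion parameters is plausible for 192 gigabytes of memory. The sketch below assumes 16-bit weights (2 bytes per parameter), a precision the presentation did not specify, and it counts only the weights, ignoring activations and any inference cache. The function and constant names are illustrative, not AMD's.

```python
# Rough estimate of the memory needed just to hold a model's weights.
# Assumption (not stated in the presentation): 2 bytes per parameter (16-bit).

MI300X_HBM_GB = 192  # memory capacity cited for the MI300X


def weight_footprint_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Return the size of the weights in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


def fits_in_memory(num_params: float,
                   capacity_gb: float = MI300X_HBM_GB,
                   bytes_per_param: int = 2) -> bool:
    """True if the weights alone fit within the given capacity."""
    return weight_footprint_gb(num_params, bytes_per_param) <= capacity_gb


for name, params in [("Falcon-40B", 40e9), ("80B-parameter model", 80e9)]:
    size = weight_footprint_gb(params)
    print(f"{name}: {size:.0f} GB of weights, fits: {fits_in_memory(params)}")
```

Under these assumptions, Falcon-40B needs about 80 gigabytes for its weights and an 80-billion-parameter model about 160 gigabytes, which leaves some headroom within 192 gigabytes for everything else the model needs at run time.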

“When you compare MI300X to the competition, MI300X offers 2.4 times more memory, and 1.6 times more memory bandwidth, and with all of that additional memory capacity, we actually have an advantage for large language models because we can run larger models directly in memory.”

Being able to run the entire model in memory, said Su, means that “for the largest models, that actually reduces the number of GPUs you need, significantly speeding up the performance, especially for inference, as well as reducing the total cost of ownership.”
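The GPU-count argument can be illustrated with simple arithmetic. The sketch below is an assumption-laden estimate, not AMD's methodology: it assumes 16-bit weights, counts only the weights, and compares a 192-gigabyte part with an 80-gigabyte part (the capacity implied by the 2.4-times claim). The 175-billion-parameter model is a hypothetical example, not one shown in the presentation.

```python
import math


def gpus_needed(num_params: float, capacity_gb: float,
                bytes_per_param: int = 2) -> int:
    """Minimum number of GPUs whose combined memory holds the weights.

    Assumes 2 bytes per parameter by default and ignores activations,
    caches, and any overhead from splitting a model across devices.
    """
    weights_gb = num_params * bytes_per_param / 1e9
    return math.ceil(weights_gb / capacity_gb)


HYPOTHETICAL_PARAMS = 175e9  # illustrative model size, 350 GB at 16-bit

print(gpus_needed(HYPOTHETICAL_PARAMS, 192))  # 2
print(gpus_needed(HYPOTHETICAL_PARAMS, 80))   # 5
```

In practice the real counts would be higher on both sides once run-time memory is included, but the ratio shows why more memory per GPU translates into fewer GPUs per model.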

“I love this chip, by the way,” enthused Su. “We love this chip.”

“With MI300X, you can reduce the number of GPUs, and as model sizes keep growing, this will become even more important.”

“With more memory, more memory bandwidth, and fewer GPUs needed, we can run more inference jobs per GPU than you could before,” said Su. That will reduce the total cost of ownership for large language models, she said, making the technology more accessible.

To compete with Nvidia’s DGX systems, Su unveiled a family of AI computers, the “AMD Instinct Platform.” The first instance of that will combine eight MI300X chips with a total of 1.5 terabytes of HBM3 memory. The server conforms to the industry-standard Open Compute Project spec.
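The 1.5-terabyte figure follows directly from the per-chip capacity. A minimal check, using illustrative names and assuming all eight chips carry the full 192 gigabytes:

```python
GPUS_PER_PLATFORM = 8    # MI300X chips in the first Instinct Platform
HBM3_PER_GPU_GB = 192    # memory per MI300X, as cited by AMD


def platform_memory_gb(gpus: int = GPUS_PER_PLATFORM,
                       per_gpu_gb: int = HBM3_PER_GPU_GB) -> int:
    """Aggregate HBM3 capacity across all GPUs in one platform."""
    return gpus * per_gpu_gb


total_gb = platform_memory_gb()
print(f"{total_gb} GB, or about {total_gb / 1000:.1f} TB")  # 1536 GB, or about 1.5 TB
```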

“For customers, they can use all this AI compute capability in memory in an industry-standard platform that drops right into their existing infrastructure,” said Su.

Unlike the MI300X, which is only a GPU, the existing MI300A goes up against Nvidia’s Grace Hopper combo chip, which pairs Nvidia’s Grace CPU with its Hopper GPU. Nvidia announced last month that Grace Hopper is in full production.

The MI300A is being built into the El Capitan supercomputer under construction at the Department of Energy’s Lawrence Livermore National Laboratory, noted Su.

The MI300A is currently sampling to AMD customers, and the MI300X will begin sampling to customers in the third quarter of this year, said Su. Both will be in volume production in the fourth quarter, she said.

You can watch a replay of the presentation on the website AMD set up for the news.